Anthropic vs The Pentagon: When AI Says No to War Without Limits

The Anthropic Pentagon clash over AI ethics: a $200M contract lost, a supply chain risk label, and the battle over autonomous weapons and mass surveillance.

"These threats do not change our position: we cannot in good conscience accede to their request." - Dario Amodei, CEO of Anthropic

On Friday, February 27, 2026, at 5:01 PM Eastern Time, an ultimatum expired. Not between warring nations, not between nuclear powers on opposite sides of a border. Between the most powerful Defense Department in the world and a San Francisco company that builds artificial intelligence.

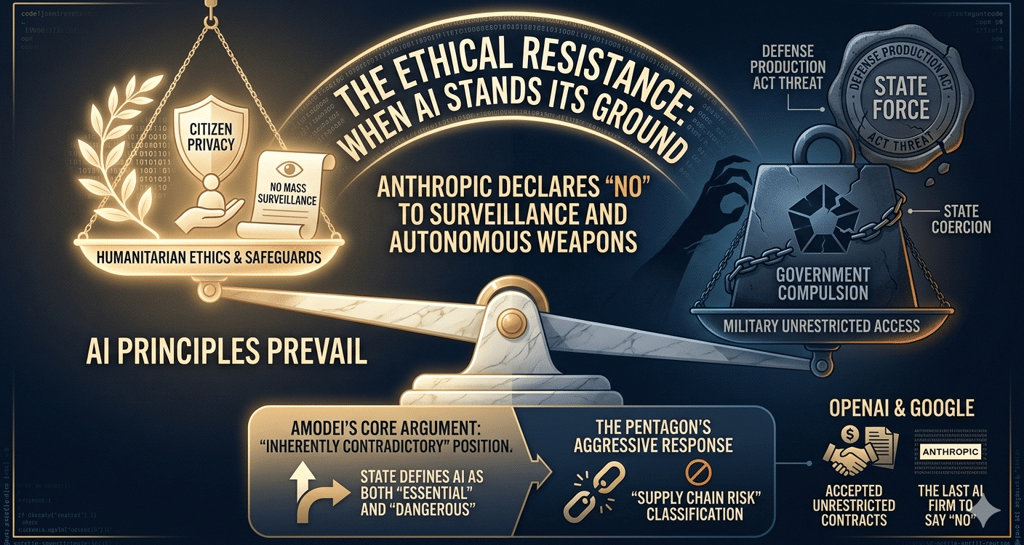

On one side, Defense Secretary Pete Hegseth, demanding unlimited access to Claude, the most advanced AI model ever deployed on the Pentagon's classified networks. On the other, Dario Amodei, CEO of Anthropic, refusing to remove just two clauses from the contract: the prohibitions on using the company's AI for mass surveillance of American citizens and for fully autonomous weapons.

The government's response? An escalation without precedent in the history of the relationship between Silicon Valley and the military-industrial complex.

Trump ordered all federal agencies to immediately cease using Anthropic's products. Hegseth designated the company a "supply chain risk to national security," a label reserved until now for foreign adversaries like Huawei and Kaspersky, never before applied to an American company. And just hours later, OpenAI announced it had secured exactly the Pentagon classified network contract that Anthropic was losing.

This is not merely a contractual dispute. It is the moment when the question we have carried since the dawn of the digital age (who controls technology: those who build it or those who use it?) stopped being theoretical.

1. The Facts: Anatomy of a Showdown

1.1 The $200 million contract

In July 2025, Anthropic signed a contract worth up to $200 million with the U.S. Department of Defense. A historic deal: Claude became the first frontier AI model deployed on the Pentagon's classified networks, beating Google's Gemini and OpenAI's ChatGPT in the race for security clearance.

The scope was broad: intelligence analysis, modeling and simulation, operational planning, cyber operations. According to Axios, Claude was used in real operations, including the operation to capture former Venezuelan president Nicolás Maduro.

But the contract incorporated two clauses from Anthropic's Acceptable Use Policy that would change everything: prohibitions on use for mass surveillance of American citizens and for fully autonomous weapons, meaning weapons that select and engage targets without any human intervention.

1.2 The ultimatum

In early 2026, the Pentagon, which the Trump administration had rebranded the "Department of War" (its pre-1947 name), established a new policy: all AI providers must make their models available for "all lawful purposes," with no company-imposed exceptions.

For Anthropic, this meant removing the two clauses. The company refused.

On February 24, at a Pentagon meeting with Amodei that sources described as "cordial," Hegseth delivered an ultimatum: accept by 5:01 PM on Friday, February 27, or face consequences.

The threatened consequences were threefold, and all unprecedented:

Cancellation of the $200 million contract

Designation as a "supply chain risk," a label that would require every military contractor to certify that it does not use Anthropic products

Invocation of the Defense Production Act, a 1950 law designed for wartime emergencies that allows the president to compel private companies to provide products to the government

As Amodei noted in his public statement on February 26, these latter two threats are "inherently contradictory: one labels us a security risk; the other labels Claude as essential to national security."

1.3 Anthropic's response

On February 26, Amodei published a statement on Anthropic's website that deserves close examination, because it is a rare document: a Silicon Valley CEO publicly choosing to lose hundreds of millions of dollars rather than yield on ethical principles.

Amodei listed the company's patriotic credentials: first AI company to deploy models on the government's classified networks, first at the National Laboratories, first to provide custom models for national security customers. He recalled that the company had forgone hundreds of millions in revenue by cutting off access to Claude for companies linked to the Chinese Communist Party. He emphasized that Anthropic had never raised objections to specific military operations.

But on the two clauses, no compromise. The reasoning, according to Amodei, is twofold:

On mass domestic surveillance: AI makes it possible to assemble, automatically and at massive scale, scattered and individually innocuous data (geolocation, web browsing history, personal associations) into a comprehensive picture of any person's life. The government can already legally purchase such data from public sources without a warrant, a practice that the Intelligence Community itself has acknowledged raises privacy concerns.

On fully autonomous weapons: current frontier AI models are simply "not reliable enough" for use in weapons that completely remove humans from the decision loop. Anthropic offered to collaborate directly with the Pentagon on research to improve the reliability of such systems, but the offer was declined.

Overnight between February 26 and 27, the Pentagon sent Anthropic what it called its "best and final offer." Anthropic rejected it, stating the new language "made virtually no progress" on the two clauses and that new formulations presented as compromise "were paired with legalese that would allow those safeguards to be disregarded at will."

1.4 The retaliation

On Friday, February 27, about an hour before the deadline expired, Trump wrote on Truth Social:

"The Leftwing nut jobs at Anthropic have made a DISASTROUS MISTAKE trying to STRONG-ARM the Department of War, and force them to obey their Terms of Service instead of our Constitution. Therefore, I am directing EVERY Federal Agency in the United States Government to IMMEDIATELY CEASE all use of Anthropic's technology."

He allowed six months for transition. He added that Anthropic "better get their act together during this phase out period, or I will use the Full Power of the Presidency to make them comply, with major civil and criminal consequences to follow."

Shortly after the 5:01 PM deadline, Hegseth posted the coup de grâce on X:

"In conjunction with the President's directive for the Federal Government to cease all use of Anthropic's technology, I am directing the Department of War to designate Anthropic a Supply-Chain Risk to National Security. Effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic."

And then: "America's warfighters will never be held hostage by the ideological whims of Big Tech. This decision is final."

That same day, Emil Michael, Under Secretary of Defense for Research and Engineering and former Uber executive, wrote on X that Amodei "is a liar and has a God-complex" who "wants nothing more than to try to personally control the U.S. Military."

A detail revealed by Axios makes the scene even more surreal: Michael was still on the phone with Anthropic offering a deal at the very moment Hegseth tweeted the supply chain risk designation. The deal Michael was proposing would have required allowing the collection and analysis of data on Americans, from geolocation to web browsing history to personal financial information purchased from data brokers.

1.5 Anthropic fires back

On the evening of February 27, Anthropic released a second statement in which it:

Called the designation "an unprecedented action, historically reserved for US adversaries, never before publicly applied to an American company"

Declared the move "legally unsound" and that it "would set a dangerous precedent for any American company that negotiates with the government"

Announced it would challenge the designation in court

Contested Hegseth's legal authority to bar all military contractors from doing business with Anthropic, specifying that the law (10 USC 3252) limits the designation "to the use of Claude as part of Department of War contracts - it cannot affect how contractors use Claude to serve other customers"

Reassured individual and commercial customers that their access to Claude is "completely unaffected"

One passage deserves special attention: "No amount of intimidation or punishment from the Department of War will change our position on mass domestic surveillance or fully autonomous weapons."

2. OpenAI Enters the Stage (at the Perfect Moment)

Hours after Anthropic was designated a national security risk, on the evening of Friday, February 27, OpenAI CEO Sam Altman announced on X that his company had reached an agreement with the Pentagon to deploy its models on classified networks, filling exactly the void left by Anthropic.

The timing was surgical. But the truly interesting part is in the details.

Altman declared that the Pentagon agreement contains the same two limitations that Anthropic had built its battle around: no mass surveillance of American citizens, no autonomous weapons. He wrote: "Two of our most important safety principles are prohibitions on domestic mass surveillance and human responsibility for the use of force, including for autonomous weapon systems. The Pentagon agrees with these principles, reflects them in law and policy, and we put them into our agreement."

How is it possible that the Pentagon accepts from OpenAI exactly what it refused from Anthropic?

The difference, according to Fortune, lies in the contractual language. Anthropic sought to insert the prohibitions explicitly in the contract as binding clauses. OpenAI instead agreed that the Pentagon could use its technology for "any lawful purpose," while simultaneously declaring it had "put" the two limitations into the agreement, apparently through a different mechanism.

In essence: the same "red lines" as Anthropic, but expressed in language that allows the Pentagon to say it obtained "unlimited access for all lawful purposes." The difference is subtle and likely strategic: Altman closed his message by asking the Pentagon to "offer these same terms to all AI companies," suggesting Anthropic could have accepted this formulation too.

A fundamental ambiguity remains: if American laws already prohibit mass surveillance and fully autonomous weapons, why did the Pentagon refuse to put those prohibitions in writing in its contract with Anthropic? The answer, suggested by Axios, may lie in what Emil Michael was proposing over the phone: a deal that would have included the collection of Americans' personal data (geolocation, web browsing history, financial data) purchased from data brokers. That activity is technically legal, but an AI could transform it into de facto mass surveillance.

3. Why This Story Is Unprecedented

3.1 The first American company branded as "enemy"

The supply chain risk designation is a tool created to protect the military supply chain from infiltration by hostile governments. All precedents involve companies linked to adversarial powers: Huawei, banned by the FCC in 2020 for ties to the Chinese government; Kaspersky Lab, banned by the Commerce Department in 2024 for ties to the Russian government.

Anthropic is an American company, founded in San Francisco, valued at $380 billion, having just closed a $30 billion funding round. It is backed by investors including Google and Amazon. It voluntarily cut revenue by blocking Chinese access to its models. It deployed its AI on the U.S. military's classified networks.

As Charlie Bullock, senior research fellow at the Institute for Law & AI, wrote, the government cannot apply this designation without completing a risk assessment, which does not appear to have occurred, and without notifying Congress before taking action, which also does not seem to have happened. Amos Toh, senior counsel at NYU's Brennan Center for Justice, added that the statute requires demonstrating a risk of sabotage, subversion, or manipulation by an adversary: "It is not at all clear how adversaries could exploit Anthropic's usage restrictions on Claude to sabotage military systems."

3.2 The market ripple effects

If the designation is applied under its broadest interpretation (Hegseth's, not Anthropic's), the consequences would be devastating. Every Fortune 500 company with Pentagon contracts would need to stop using Claude. This potentially includes companies like Amazon, Google, and NVIDIA, which have invested billions in Anthropic and could find themselves forced to divest.

As independent analyst Shenaka Anslem Perera wrote: "It will take years to resolve in court. And in the meantime, every general counsel at every Fortune 500 company with any Pentagon exposure is going to ask one question: is using Claude worth the risk?"

3.3 The Defense Production Act paradox

The threat of invoking the Defense Production Act adds another layer of absurdity. The DPA is a 1950 law, born of the Korean War, granting the president authority to compel companies to prioritize national defense production. It was invoked during COVID-19 to boost production of ventilators and masks.

Using it to force an AI company to remove safety guardrails from its software has no legal precedent. But the real paradox is this: the Pentagon was simultaneously considering compelling Anthropic to cooperate via the DPA and banning it as a national security risk. As Amodei noted, the two positions are mutually exclusive: either Claude is indispensable for national security (so requisition it), or it's a risk (so ban it). It cannot be both.

4. The Reaction from Silicon Valley and Washington

4.1 The industry (mostly) sides with Anthropic

A remarkable aspect of this story is that the AI industry, normally fractured into competing camps, largely aligned with Anthropic, including its direct rivals.

OpenAI's Sam Altman declared he doesn't "personally think the Pentagon should be threatening DPA against these companies" and shares Anthropic's concerns. He added: "For all the differences I have with Anthropic, I mostly trust them as a company, and I think they really do care about safety."

NVIDIA CEO Jensen Huang, whose company has invested in Anthropic, said he hopes for an agreement but added that "if it doesn't get worked out, it's also not the end of the world" since there are other AI providers for the Pentagon.

Google employees came out publicly in support of Anthropic, though Google itself, which has its own Pentagon contract, has not commented.

4.2 Congress divides

The Congressional reaction followed predictable fault lines, with some surprises.

Senators Ed Markey and Chris Van Hollen wrote to Hegseth that the Pentagon's threats "represent a chilling abuse of government power." Senator Mark Warner, ranking member of the Intelligence Committee, said Trump's directive "raises serious concerns about whether national security decisions are being driven by careful analysis or political considerations," and suggested the move could be "a pretext to steer contracts to a preferred vendor," a likely reference to Elon Musk's xAI.

Representative Valerie Foushee, co-chair of the AI Commission, called the Pentagon's pressure "deeply troubling."

Senior members of the Senate Armed Services Committee sent a private letter to both Anthropic and the Pentagon, urging them to find a resolution.

4.3 Palantir and the domino effect

A detail many analyses have overlooked: Anthropic's supply chain risk designation creates an immediate problem for Palantir Technologies, the surveillance company we analyzed in a previous Network Caffè article. Palantir uses Claude to power its most sensitive Pentagon work, including the Gotham and AIP platforms used for intelligence, operational planning, and, as CEO Alex Karp himself admits, to "make America more lethal."

If the designation is enforced, Palantir will need to quickly find an alternative to Claude for all its military contracts. A Pentagon official indicated that Grok, Elon Musk's AI, is "on board with being used in a classified setting," but it is widely considered less capable than Claude.

The irony? Palantir itself, which has never placed any ethical limits on the use of its technology for surveillance or autonomous weapons, now faces difficulties because its software depended on a company that did draw those lines.

5. The Fundamental Question: Who Decides How AI Is Used?

5.1 The historical precedent: Project Maven

For those in the industry, this story echoes a familiar tune. In 2018, Google withdrew from Project Maven, a Pentagon program using AI for drone image analysis, after thousands of employees signed an internal letter stating: "We believe that Google should not be in the business of war."

The difference between 2018 and 2026 is the level of escalation. Then, Google withdrew voluntarily and the Pentagon found other providers without drama. Today, a company trying to maintain ethical limits is branded an enemy of the nation.

The message is clear: in 2018, an AI company could say "no" to the Pentagon without consequences. In 2026, saying "no" means being treated like Huawei.

5.2 The democratic dilemma

The Pentagon's position, stripped to its essence: "Once we buy a product, we decide how to use it. We have our own procedures and standards."

Anthropic's position: "Laws haven't caught up with AI capabilities yet. What is technically legal today can be incompatible with democratic values, and current models aren't reliable enough for certain applications."

Both positions have their logic. The problem is they collide on terrain where clear rules don't yet exist. As Gregory Allen, senior advisor at the Center for Strategic and International Studies, observed: "The user base within the Department of Defense loves Anthropic, loves Claude, and says that their restrictions on usage, at least from the conversations I have been having, have never been triggered."

In other words: Anthropic's two clauses have never created a concrete operational problem. The Pentagon is fighting for the principle of unlimited access, not for a demonstrated operational need.

This makes the clash even more significant. This is not a case where Anthropic's restrictions prevented a military operation. It's about the fundamental question: does a private company have the right to set ethical limits on the technology it sells to the government? And if so, does the government have the right to punish it for doing so?

5.3 The gray zone

Pentagon spokesperson Sean Parnell stated the Department "has no interest" in using AI for fully autonomous weapons or mass surveillance, noting the latter is already illegal. If so, why not agree to put it in writing?

The answer lies in the "gray areas" the Pentagon itself cited as reason for rejecting the clauses. What counts as "mass surveillance"? Is the legal purchase of geolocation data on millions of Americans from data brokers mass surveillance? Today, probably not in a legal sense. But if that data is processed by an AI capable of automatically reconstructing every individual's movements, habits, and associations, the answer might change.

The same ambiguity applies to autonomous weapons. A drone with AI that identifies targets and presents them to a human operator for approval is a semi-autonomous weapon, and Anthropic doesn't oppose its use. But where does semi-autonomy end and full autonomy begin? When the time between target selection and human approval shrinks to seconds? When an operator approves 50 AI-generated targets per hour without time to evaluate each one individually?

These are not theoretical questions. They are the questions that will define 21st-century warfare. And right now, a San Francisco company is the only one insisting they be addressed before proceeding.

6. The Legal Implications: Uncharted Territory

6.1 The supply chain risk designation

The most devastating weapon in the Pentagon's arsenal isn't the contract cancellation; $200 million is manageable for a company valued at $380 billion. The real weapon is the supply chain risk designation.

If applied broadly, every company doing business with the Pentagon, and indirectly with any federal agency, would need to certify they don't use Anthropic technology. This would hit not just Anthropic's military contracts but potentially its entire enterprise customer base: the very companies using Claude for coding, data analysis, and customer service who also hold government contracts.

Anthropic has contested this interpretation, arguing that the statute (10 USC 3252) limits the designation to Department of Defense contracts and "cannot affect how contractors use Claude to serve other customers." But in a political climate where the President threatens "major civil and criminal consequences," how many companies will want to test who's right?

Several legal experts have questioned the designation's legal basis. The statute requires a formal risk assessment and Congressional notification, requirements that don't appear to have been met. It also requires proof of a risk of sabotage or manipulation by an adversary, a condition difficult to sustain against a company that voluntarily cut off Chinese clients.

6.2 The legal battle ahead

Anthropic has announced it will challenge the designation in court. The case could become a landmark in American technology law, raising fundamental questions: can the government use national security designations as a punitive tool against companies exercising their contractual freedom? Can it compel a company to remove safety guardrails from its software?

But the clock works against Anthropic. As Shenaka Anslem Perera observed, "It will take years to resolve in court," and in the meantime, the business damage may already be done.

7. The Bigger Picture: AI as the New Arena of the Tech Cold War

This story doesn't happen in a vacuum. It sits within a context where artificial intelligence has become the primary front in geopolitical competition between the United States and China.

The Trump administration has repeatedly described the AI race as "equivalent to the space race during the Cold War." In this framework, any obstacle to military AI adoption is perceived as an advantage conceded to China.

But the paradox is glaring: punishing one of America's most advanced AI companies, potentially forcing investors like Google and Amazon to divest, potentially destroying the enterprise base that funds the research, is exactly the kind of self-inflicted wound that benefits China. As Gregory Allen at CSIS said: "To take a domestic AI champion at a time when the White House is saying that the AI race with China is equivalent to the space race: you do not want to take one of the crown jewels of your industry and light it on fire over something like this."

Senator Warner went further, suggesting the moves against Anthropic "pose an enormous risk to U.S. defense readiness and the willingness of the U.S. private sector and academia to work with the IC and DoD."

The risk, in essence, is that Anthropic's exemplary punishment achieves the opposite of its intended effect: not making AI companies more obedient, but convincing them that working with the Pentagon isn't worth the risk. As Lauren Kahn of the Center for Security and Emerging Technology put it: "I'm really, truly, honestly worried that private companies will say, 'It's not worth our time to work with the defense sector moving forward.'"

8. Questions That Remain Open

As of this writing, March 1, 2026, the situation is rapidly evolving. The unanswered questions are numerous:

Will the designation be formalized? Anthropic stated it had not yet received direct communication from the Pentagon or the White House. Axios reported that formal paperwork had not been initiated at the time of Hegseth's announcement on X. The process the statute requires (a risk assessment, Congressional notification, and exhaustion of less intrusive alternatives) does not appear to have been followed.

What will the impact be on contractors? Companies like Palantir, Lockheed Martin, Boeing, and Anthropic's own investors (Amazon, Google, NVIDIA) will need to navigate uncertain legal terrain. The battle will be over interpretation: does the designation cover only the use of Claude in military contracts, as Anthropic argues? Or does it extend to any commercial activity with Anthropic, as Hegseth declares?

Will the OpenAI deal hold? If the Pentagon effectively accepted the same limitations from OpenAI, even if differently worded, it could find itself in the same position in the future. Unless those "same limitations" aren't quite so similar in practice.

Will Congress intervene? Several lawmakers from both parties have expressed concern. But the current composition of Congress makes direct legislative intervention unlikely.

Will Anthropic survive economically? Losing the $200 million contract isn't existential for a company valued at $380 billion. But if the broad interpretation of the designation holds, the erosion of the enterprise customer base could be devastating. An IPO, which seemed imminent, would become far more complex.

9. Conclusion: The Choice That Will Define the AI Era

This story is about a $200 million contract, but it's really about much more. It's about the relationship between democracy and technology. Between public power and private innovation. Between national security and civil rights.

Anthropic is not an idealistic nonprofit. It's a for-profit company valued at nearly $400 billion that wants to dominate the enterprise AI market. Its stand is not pure altruism; it's also a branding move that differentiates it in a market where every competitor has already yielded to the Pentagon's demands.

But that's no reason to diminish the substance of the choice. OpenAI and Google have removed prohibitions on military use from their policies, leaving Anthropic as the last major AI company to say "no." In an industry where consensus is the norm, dissent has value regardless of motivation.

The real question, the one this story forces all of us to confront, is more uncomfortable: do we want a future where the planet's most advanced AI can be used to automatically process millions of citizens' personal data without the safeguards an explicit contract would provide? Do we want weapons that decide who lives and who dies at processor speed, without a human pressing the button?

As Amodei wrote: "In a narrow set of cases, we believe AI can undermine, rather than defend, democratic values."

At Network Caffè, we believe that sentence deserves to be carved into the marble of the digital age. Not because Anthropic is perfect; no $380 billion corporation is. But because at a moment when power is asking technology to remove all limits, someone must have the courage to say: "No. Not everything that is legal is right. Not everything that is possible is desirable."

Henry Ford said that "real progress happens only when advantages of a new technology become available to everybody." The opposite of progress is when the advantages of a new technology become instruments of control over everybody.

This battle has just begun. Its consequences will define the relationship between democracy and artificial intelligence for decades to come.

It's up to us to decide which side we're on.

Bibliography:

Anthropic (February 26, 2026). "Statement from Dario Amodei on our discussions with the Department of War"

Anthropic (February 27, 2026). "Statement on the comments from Secretary of War Pete Hegseth"

Axios (February 27, 2026). "Trump moves to blacklist Anthropic's Claude from government work"

CNN Business (February 27, 2026). "Anthropic rejects latest Pentagon offer: 'We cannot in good conscience accede to their request'"

CNN Business (February 27, 2026). "Trump administration orders military contractors and federal agencies to cease business with Anthropic"

NPR (February 27, 2026). "OpenAI announces Pentagon deal after Trump bans Anthropic"

Fortune (February 28, 2026). "OpenAI sweeps in to snag Pentagon contract after Anthropic labeled 'supply chain risk' in unprecedented move"

CNBC (February 27, 2026). "Anthropic faces lose-lose scenario in Pentagon conflict"

TechCrunch (February 27, 2026). "Pentagon moves to designate Anthropic as a supply-chain risk"

CBS News (February 27, 2026). "Hegseth declares Anthropic a supply chain risk"

NBC News (February 27, 2026). "OpenAI strikes deal with Pentagon after Trump orders government to stop using Anthropic"

The Hill (February 27, 2026). "Anthropic to challenge Pentagon's supply chain risk tag"

Breaking Defense (February 27, 2026). "Hegseth labels Anthropic a supply chain risk"

ABC News (February 27, 2026). "Trump orders US government to cut ties with Anthropic"

Washington Post (February 28, 2026). "Pentagon's Anthropic fight reshapes its relations with Silicon Valley"

CNBC (February 27, 2026). "Pentagon-Anthropic AI standoff is real-time testing balance of power in future of warfare"

TechPolicy.Press (February 28, 2026). "A Timeline of the Anthropic-Pentagon Dispute"

Axios (February 27, 2026). "What Trump labeling Anthropic AI a supply chain risk means"

Rolling Stone (February 27, 2026). "Anthropic Defies Pentagon's Demands as Contract Deadline Looms"